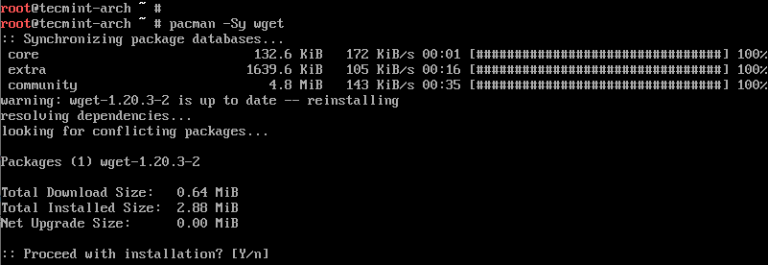

On openSUSE, wget can be installed with zypper:

sudo zypper install wget

How to Run the wget Command in Linux

To run wget, type wget followed by any options and the URL in the terminal. The basic syntax of the wget command is:

wget OPTIONS URL

Here are some of the most commonly used wget command options:

| Option | Description |
|--------|-------------|
| -i | Downloads files specified in URLs in a local or external file |
| -b | Downloads a file in the background and frees up the terminal |
| -O | Downloads a file using a different file name |
| -m | Downloads a mirror copy of a website, including all website files |
| -c | Resumes download of a partially downloaded file |
| -P | Specifies the directory that a file will be downloaded to |

If you want to try to grab a whole webpage, including all images, styles, and scripts, you can use wget -p. Your success will vary, as some modern web pages don't work well when ripped out of their native habitat.
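Because wget is not always installed by default, a script that calls it can check for it first and print an installation hint if it is missing. The following is a minimal POSIX sh sketch; the require_cmd function name and its message format are hypothetical and not part of wget.

```shell
#!/bin/sh
# Hypothetical helper (not part of wget): succeed if a command is on PATH.
# If it is missing, print the given hint to stderr and return non-zero.
require_cmd() {
  if command -v "$1" >/dev/null 2>&1; then
    return 0
  fi
  printf '%s: command not found. %s\n' "$1" "$2" >&2
  return 1
}

# Example use (not executed here; URL is a placeholder):
#   require_cmd wget "On openSUSE: sudo zypper install wget" || exit 1
#   wget URL
```

Checking up front gives a clear message instead of a confusing failure halfway through a longer script.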

Examples

Download a File from an HTTPS Server

Download a single file, basic usage:

wget URL

Continue Downloading a File

If a download only partially completed, continue (resume) downloading it with the -c option:

wget -c URL

To try downloading a file up to 5 times without printing progress to the terminal, set the number of tries with -t and enable quiet mode with -q:

wget -t 5 -q URL

Download From a List of Files, Appending to Log

If you have a text file containing a list of URLs to download, you can pass it directly to wget with -i and use -a to append a log of the results for later inspection:

wget -a log.txt -i url-list.txt

You could also use -o to write out the log file; it will overwrite, rather than append to, an existing log file if one is already there.

Download From a List of Files, Waiting 6 Seconds Between Each Download, with a 12 Second Timeout

Wait between downloads to reduce server load, and time out if the server fails to respond within 12 seconds:

wget -w 6 -T 12 -i url-list.txt

Download a File from an FTPS Server which Requires a Username and Password

Download from an FTPS server with the username bob and the password boat:

wget --user=bob --password=boat ftps:///file.zip

Download Directory Recursively via FTP with a Depth Limit

Downloading recursively (-r) will download the contents of a folder and the contents of the folders in that folder. The -l option specifies the maximum recursion depth. A depth limit of 3 is defined in this example, meaning that if a folder is nested within 3 other folders, it won't be downloaded:

wget -r -l 3 ftps:///path/to/folder

Downloading a Whole Directory including ALL Contents via FTP

The -m (mirror) option turns on recursion and time-stamping, sets infinite recursion depth, and keeps FTP directory listings:

wget -m ftps:///path/to/folder

Cloning a Whole Web Page Using Wget

The -p option causes wget to download all the files that are necessary to properly display a given HTML page. This includes such things as inlined images, sounds, and referenced stylesheets:

wget -p URL

Download a File with a POST Request

Make an HTTP POST request instead of the default GET request, and send data. The data string should be in the format "key1=value1&key2=value2". In this example, we're sending two pieces of POST data, postcode and country:

wget --post-data="postcode=2000&country=Australia" URL

An empty string can also be sent with --post-data. If you're making POST requests, cURL can be more versatile.
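The wget manual notes that --post-data expects content in the key1=value1&key2=value2 form, with special characters percent-encoded. As a minimal sketch, assuming POSIX sh and ASCII keys and values, the hypothetical helpers urlencode and build_post_data (not part of wget) assemble such a string from key/value pairs:

```shell
#!/bin/sh
# Hypothetical helpers (not part of wget) for building a --post-data string.
# Assumes ASCII input; multibyte characters are not handled.

# Percent-encode one string: unreserved characters (A-Z a-z 0-9 . ~ _ -)
# pass through, every other character becomes %XX (its hex code).
urlencode() {
  _s=$1
  _out=
  while [ -n "$_s" ]; do
    _rest=${_s#?}          # everything after the first character
    _c=${_s%"$_rest"}      # the first character
    _s=$_rest
    case "$_c" in
      [A-Za-z0-9.~_-]) _out=$_out$_c ;;
      *) _out=$_out$(printf '%%%02X' "'$_c") ;;
    esac
  done
  printf '%s\n' "$_out"
}

# Join key/value argument pairs into key1=value1&key2=value2,
# percent-encoding each key and value.
build_post_data() {
  _bp_out=
  _bp_sep=
  while [ "$#" -ge 2 ]; do
    _bp_out=$_bp_out$_bp_sep$(urlencode "$1")=$(urlencode "$2")
    _bp_sep='&'
    shift 2
  done
  printf '%s\n' "$_bp_out"
}

# Example use (not executed here; URL is a placeholder):
#   wget --post-data="$(build_post_data postcode 2000 country Australia)" URL
```

With the values from the POST example, build_post_data postcode 2000 country Australia yields postcode=2000&country=Australia unchanged, while a value containing a space, such as New York, is sent as New%20York.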

Common Options

Here are the wget options, straight from the docs:

| Option | Description |
|--------|-------------|
| -o logfile | Log all messages to logfile. The messages are normally reported to standard error. |
| -a logfile | Append to logfile. This is the same as -o, only it appends to logfile instead of overwriting the old log file. If logfile does not exist, a new file is created. |
| -i file | Read URLs from a local or external file. If this function is used, no URLs need be present on the command line. |
| -c | Continue getting a partially-downloaded file. |
| -T seconds | Set the network timeout to seconds seconds. |
| -w seconds | Wait the specified number of seconds between the retrievals. |
| --user=user | Set the HTTP or FTP authentication username. |
| --password=password | Set the HTTP or FTP authentication password. |
| --post-data=string | Make a POST request instead of GET and send data. |
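The -c option pairs naturally with an outer retry loop when a connection keeps dropping and wget exits with an error. wget's own -t option already retries within a single run, so this is only a minimal POSIX sh sketch for the cases where wget itself gives up; the retry_until_ok function name and the attempt and delay values are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper (not part of wget): run a command until it succeeds,
# at most max_attempts times, sleeping delay_seconds between attempts.
# Returns 0 on the first success, 1 if every attempt fails.
retry_until_ok() {
  _ru_max=$1
  _ru_delay=$2
  shift 2
  _ru_attempt=1
  while [ "$_ru_attempt" -le "$_ru_max" ]; do
    if "$@"; then
      return 0
    fi
    # Pause before the next attempt, but not after the last one.
    if [ "$_ru_attempt" -lt "$_ru_max" ]; then
      sleep "$_ru_delay"
    fi
    _ru_attempt=$((_ru_attempt + 1))
  done
  return 1
}

# Example use (not executed here; URL is a placeholder):
#   retry_until_ok 5 30 wget -c -a log.txt URL
```

The -c flag is what makes the restarts useful: without it, a restarted wget that finds an existing file saves a new copy (for example file.zip.1) instead of continuing the partial one.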
